Master Projects

Master Thesis Research Projects

The following Master thesis research projects are offered at Nikhef. If you are interested in one of these projects, please contact the coordinator listed with the project.

Projects with a 2024 start [WORK IN PROGRESS, please look below for older projects]

ALICE: Search for new physics with 4D tracking at the most sensitive vertex detector at the LHC

With the newly installed Inner Tracking System consisting fully of monolithic detectors, ALICE is very sensitive to particles with low transverse momenta, more so than ATLAS and CMS. This will be even more so for the ALICE upgrade detector in 2033. This detector could potentially be even more sensitive to long-lived particles that leave peculiar tracks, such as disappearing or kinked tracks, in the tracker by using timing information along a track. In this project you will investigate how timing information in the different tracking layers can improve or even enable a search for new physics beyond the Standard Model in ALICE. If you show a possibility for major improvements, this can have real consequences for the choice of sensors for this ALICE inner tracker upgrade.

Contact: Jory Sonneveld and Panos Christakoglou

ALICE: Connecting the hot and cold QCD matter by searching for the strongest magnetic field in nature

In a non-central collision between two Pb ions, with a large value of the impact parameter, the charged nucleons that do not participate in the interaction (called spectators) create strong magnetic fields. A back-of-the-envelope calculation using the Biot-Savart law brings the magnitude of this field close to 10^19 Gauss, in agreement with state-of-the-art theoretical calculations, making it the strongest magnetic field in nature. The presence of this field could have direct implications for the motion of final-state particles. The magnetic field, however, decays rapidly. The decay rate depends on the electric conductivity of the medium, which is experimentally poorly constrained. Overall, the presence of the magnetic field has so far not been confirmed experimentally; establishing it is the main goal of this project and can have implications for measurements of gravitational waves emitted from the merger of neutron stars.

Contact: Panos Christakoglou

ALICE/LHCb Tracking: Innovative tracking techniques exploiting modern heterogeneous architectures

The reconstruction of charged particle tracks is one of the most computationally demanding components of modern high energy physics experiments. In particular, the upcoming High-Luminosity Large Hadron Collider (HL-LHC) makes the use of fast tracking algorithms on modern computing architectures with many cores and accelerators essential. In this project we will investigate innovative, machine-learning-based, experiment-agnostic tracking algorithms on modern architectures, e.g. GPUs and FPGAs.

Contact: Jacco de Vries and Panos Christakoglou

ATLAS: Performing a Bell test in Higgs to di-boson decays

Recently, theorists [1] have proposed to perform a Bell test in Higgs to di-boson decays. This is a fundamental test of not only quantum mechanics but also of quantum field theory, using the elusive scalar Higgs particle.

At Nikhef we started to brainstorm on the experimental aspects of this challenging measurement. Due to the studies of a PhD student [2] we have considerable experience in the reconstruction of Higgs rest frame angles that are essential to perform a Bell test.

Is there a master student who wants to join our efforts to study the "spooky action at a distance" in Higgs to WW decays? Please contact Peter.Kluit@nikhef.nl.

[1] Review article https://arxiv.org/pdf/2402.07972.pdf

[2] https://www.nikhef.nl/pub/services/biblio/theses_pdf/thesis_R_Aben.pdf

ATLAS: A new timing detector - the HGTD

The ATLAS detector is going to get a new ability: a timing detector. This allows us to reconstruct tracks not only in the 3 dimensions of space but also to measure very precisely (at picosecond level) the time at which the particles pass the sensitive layers of the HGTD detector. The added information helps to construct the trajectories of the particles created at the LHC in 4 dimensions and ultimately will lead to a better reconstruction of physics at ATLAS. The new HGTD detector is still under construction and work needs to be done on different levels, such as understanding the detector response (taking measurements in the lab and performing simulations) or developing algorithms to reconstruct the particle trajectories (programming and analysis work).

Several projects are available within the context of the new HGTD detector:

- The first possibility is to focus on the impact on physics analysis performance by studying how the timing measurements can be included in the reconstruction of tracks, and how much this improves our understanding of the physical processes behind the particles produced in the LHC collisions. With this work you will be part of the ATLAS group at Nikhef.

- The second possibility is to test the sensors in our lab and in test-beam setups at CERN/DESY. The analysis will be performed in the context of the ATLAS HGTD test beam group, in connection with both the ATLAS group and the R&D department at Nikhef.

- The third is to contribute to an ongoing effort to precisely simulate/model the silicon avalanche detectors in the Allpix2 framework. There are several models that try to describe the detector's response. The models depend on operation temperature, field strengths and radiation damage. We are getting close to being able to model our detector, but we are not there yet. This work will be within the ATLAS group.

Contact: Hella Snoek

Dark Matter: Building better Dark Matter Detectors - the XAMS R&D Setup

The Amsterdam Dark Matter group operates an R&D xenon detector at Nikhef. The detector is a dual-phase xenon time-projection chamber and contains about 0.5 kg of ultra-pure liquid xenon in the central volume. We use this detector for the development of new detection techniques - such as utilizing our newly installed silicon photomultipliers - and to improve the understanding of the response of liquid xenon to various forms of radiation. The results could be directly used in the XENONnT experiment, the world’s most sensitive direct detection dark matter experiment at the Gran Sasso underground laboratory, or for future Dark Matter experiments like DARWIN. We have several interesting projects for this facility. We are looking for someone who is interested in working in a laboratory on high-tech equipment, modifying the detector, taking data and analyzing the data themselves. You will "own" this experiment.

Contact: Patrick Decowski and Auke Colijn

Dark Matter: Searching for Dark Matter Particles - XENONnT Data Analysis

The XENON collaboration has used the XENON1T detector to achieve the world’s most sensitive direct detection dark matter results and is currently operating the XENONnT successor experiment. The detectors operate at the Gran Sasso underground laboratory and consist of so-called dual-phase xenon time-projection chambers filled with ultra-pure xenon. Our group has an opening for a motivated MSc student to do analysis with the new data coming from the XENONnT detector. The work will consist of understanding the detector signals and applying a deep neural network to improve the (gas-) background discrimination in our Python-based analysis tool to improve the sensitivity for low-mass dark matter particles. The work will continue a study started by a recent graduate. There will also be opportunity to do data-taking shifts at the Gran Sasso underground laboratory in Italy.

Contact: Patrick Decowski and Auke Colijn

Dark Matter: Signal reconstruction and correction in XENONnT

XENONnT is a low-background experiment operating at the INFN - Gran Sasso underground laboratory with the main goal of detecting Dark Matter interactions with xenon target nuclei. The detector, consisting of a dual-phase time projection chamber, is filled with ultra-pure xenon, which acts as a target and detection medium. Understanding the detector's response to various calibration sources is a mandatory step in exploiting the scientific data acquired. This MSc thesis aims to develop new methods to improve the reconstruction and correction of scintillation/ionization signals from calibration data. The student will work with modern analysis techniques (Python-based) and will collaborate with other analysts within the international XENON Collaboration.

Contact: Maxime Pierre, Patrick Decowski

Dark Matter: The Ultimate Dark Matter Experiment - DARWIN Sensitivity Studies

DARWIN is the “ultimate” direct detection dark matter experiment, with the goal to reach the so-called “neutrino floor”, when neutrinos become a hard-to-reduce background. The large and exquisitely clean xenon mass will allow DARWIN to also be sensitive to other physics signals such as solar neutrinos, double-beta decay from Xe-136, axions and axion-like particles etc. While the experiment will only start in 2027, we are in the midst of optimizing the experiment, which is driven by simulations. We have an opening for a student to work on the GEANT4 Monte Carlo simulations for DARWIN. We are also working on a “fast simulation” that could be included in this framework. It is your opportunity to steer the optimization of a large and unique experiment. This project requires good programming skills (Python and C++) and data analysis/physics interpretation skills.

Contact: Tina Pollmann, Patrick Decowski or Auke Colijn

Dark Matter: Exploring new background sources for DARWIN

Experiments based on the xenon dual-phase time projection chamber detection technology have already demonstrated their leading role in the search for Dark Matter. The unprecedented low level of background reached by the current generation, such as XENONnT, allows such experiments to be sensitive to new rare-events physics searches, broadening their physics program. The next generation of experiments is already under consideration with the DARWIN observatory, which aims to surpass its predecessors in terms of background level and mass of xenon target. With the increased sensitivity to new physics channels, such as the study of neutrino properties, new sources of backgrounds may arise. This MSc thesis aims to investigate potential new sources of background for DARWIN and is a good opportunity for the student to contribute to the design of the experiment. This project will rely on Monte Carlo simulation tools such as GEANT4 and FLUKA, and good programming skills (Python and C++) are advantageous.

Contact: Maxime Pierre, Patrick Decowski

Dark Matter: Sensitive tests of wavelength-shifting properties of materials for dark matter detectors

Rare event search experiments that look for neutrino and dark matter interactions are performed with highly sensitive detector systems, often relying on scintillators, especially liquid noble gases, to detect particle interactions. Detectors consist of structural materials that are assumed to be optically passive, and light detection systems that use reflectors, light detectors, and sometimes, wavelength-shifting materials. MSc theses are available related to measuring the efficiency of light detection systems that might be used in future detectors. Furthermore, measurements to ensure that presumably passive materials do not fluoresce, at the low level relevant to the detectors, can be done. Part of the thesis work can include Monte Carlo simulations and data analysis for current and upcoming dark matter detectors, to study the effect of different levels of desired and nuisance wavelength shifting. In this project, students will acquire skills in photon detection, wavelength shifting technologies, vacuum systems, UV and extreme-UV optics, detector design, and optionally in Python and C++ programming, data analysis, and Monte Carlo techniques.

Contact: Tina Pollmann

Detector R&D: Energy Calibration of hybrid pixel detector with the Timepix4 chip

The Large Hadron Collider at CERN will increase its luminosity in the coming years. For the LHCb experiment the number of collisions per bunch crossing increases from 7 to more than 40. To distinguish all tracks from the quasi-simultaneous collisions, time information will have to be used in addition to spatial information. A big step on the way to fast silicon detectors is the recently developed Timepix4 ASIC. Timepix4 consists of 448x512 pixels, but the pixels are not identical and there are pixel-to-pixel fluctuations in the time and charge measurement. The ultimate time resolution can only be achieved after calibration of both the time and energy measurements. The goal of this project is to study the energy calibration of Timepix4. Typical research questions are: how does the resolution depend on threshold and Krummenacher (discharge) current, and does a different sensor affect the energy resolution? In this research you will do measurements with calibration pulses, lasers and radioactive sources to obtain data to calibrate the detector. The work consists of hands-on work in the lab to build/adapt the test set-up, and analysis of the data obtained.

Contact: Daan Oppenhuis, Hella Snoek

Detector R&D: Studies of wafer-scale sensors for ALICE detector upgrade and beyond

One of the biggest milestones of the ALICE detector upgrade (foreseen in 2026) is the implementation of wafer-scale (~ 28 cm x 18 cm) monolithic silicon active pixel sensors in the tracking detector, with the goal of having truly cylindrical barrels around the beam pipe. To demonstrate this technology, which is unprecedented in high energy physics detectors, a few chips will soon be available in the Nikhef laboratories for testing and characterization purposes. The goal of the project is to contribute to the validation of the samples against the ALICE tracking detector requirements, with a focus on timing performance in view of other applications in future high energy physics experiments beyond ALICE. We are looking for a student with a focus on lab work and an interest in high precision measurements with cutting-edge instrumentation. You will be part of the Nikhef Detector R&D group and you will have, at the same time, the chance to work in an international collaboration where you will report on the performance of these novel sensors. There may even be the opportunity to join beam tests at CERN or DESY facilities. Besides interest in hardware, some proficiency in computing is required (Python or C++/ROOT).

Contact: Jory Sonneveld

Detector R&D: Time resolution of monolithic silicon detectors

Monolithic silicon detectors based on industrial Complementary Metal Oxide Semiconductor (CMOS) processes offer a promising approach for large scale detectors due to their ease of production and low material budget. Until recently, their low radiation tolerance has hindered their applicability in high energy particle physics experiments. However, new prototypes have overcome these hurdles, making them feasible candidates for future experiments in high energy particle physics. Achieving the required radiation tolerance has brought the spatial and temporal resolution of these detectors to the forefront. In this project, you will investigate the temporal performance of a radiation-hard monolithic detector prototype, using laser setups in the laboratory. You will also participate in meetings with the international collaboration working on this detector, where you will report on the prototype's performance. Depending on the progress of the work, there may be a chance to participate in test beams performed at the CERN accelerator complex and in a first full three-dimensional characterization of the prototype's performance using a state-of-the-art two-photon absorption laser setup at Nikhef. This project is looking for someone interested in working hands-on with cutting-edge detector and laser systems at the Nikhef laboratory. Python programming skills and Linux experience are an advantage.

Contact: Jory Sonneveld, Uwe Kraemer

Detector R&D: Improving a Laser Setup for Testing Fast Silicon Pixel Detectors

For the upgrades of the innermost detectors of experiments at the Large Hadron Collider in Geneva, in particular to cope with the large number of collisions per second from 2027, the Detector R&D group at Nikhef tests new pixel detector prototypes with a variety of laser equipment with several wavelengths. The lasers can be focused down to a small spot to scan over the pixels on a pixel chip. Since the laser penetrates the silicon, the pixels will not be illuminated by just the focal spot, but by the entire three dimensional hourglass or double cone like light intensity distribution. So, how well defined is the volume in which charge is released? And can that be made much smaller than a pixel? And, if so, what would the optimum focus be? For this project the student will first estimate the intensity distribution inside a sensor that can be expected. This will correspond to the density of released charge within the silicon. To verify predictions, you will measure real pixel sensors for the LHC experiments. This project will involve a lot of 'hands on work' in the lab and involve programming and work on unix machines.
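As a first feel for the "hourglass" light distribution mentioned above, the following is a minimal sketch that assumes an ideal Gaussian beam focused inside silicon. It neglects absorption, refraction at the sensor surface and lens aberrations, all of which a real estimate would need. The function names, the 1064 nm wavelength, the 1 µm waist and the refractive index of about 3.6 are illustrative assumptions, not parameters of the Nikhef setup.

```python
import math

def rayleigh_range(w0_um, wavelength_um, n=3.6):
    """Rayleigh range (um) of a Gaussian beam with waist w0 in a medium of index n.

    wavelength_um is the vacuum wavelength; n ~ 3.6 for silicon is an assumption.
    """
    return math.pi * w0_um**2 * n / wavelength_um

def beam_radius(z_um, w0_um, wavelength_um, n=3.6):
    """1/e^2 intensity radius (um) at a distance z from the focal plane."""
    z_r = rayleigh_range(w0_um, wavelength_um, n)
    return w0_um * math.sqrt(1.0 + (z_um / z_r) ** 2)

def relative_intensity(r_um, z_um, w0_um, wavelength_um, n=3.6):
    """Intensity at radius r and depth z, normalised to the value at the focus.

    Absorption along the path is ignored in this sketch.
    """
    w = beam_radius(z_um, w0_um, wavelength_um, n)
    return (w0_um / w) ** 2 * math.exp(-2.0 * r_um**2 / w**2)

if __name__ == "__main__":
    w0, lam = 1.0, 1.064  # hypothetical waist and wavelength in um
    for z in (0.0, 25.0, 50.0, 100.0):
        print(f"z = {z:6.1f} um  beam radius = {beam_radius(z, w0, lam):6.2f} um")
```

Comparing the beam radius at the sensor surfaces with the pixel pitch gives a rough indication of whether the illuminated volume stays within a single pixel for a given focus.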

Contact: Martin Fransen

Detector R&D: Time resolution of hybrid pixel detectors with the Timepix4 chip

Precise time measurements with silicon pixel detectors are very important for experiments at the High-Luminosity LHC and the future circular collider. The spatial resolution of current silicon trackers will not be sufficient to distinguish the large number of collisions that will occur within individual bunch crossings. In a new method, typically referred to as 4D tracking, spatial measurements of pixel detectors will be combined with time measurements to better distinguish collision vertices that occur close together. New sensor technologies are being explored to reach the required time measurement resolution of tens of picoseconds, and the results are promising. However, the signals that these pixelated sensors produce have to be processed by front-end electronics, which hence play a large role in the total time resolution of the detector. The front-end electronics has many parameters that can be optimised to give the best time resolution for a specific sensor type. In this project you will be working with the Timepix4 chip, which is a so-called application specific integrated circuit (ASIC) that is designed to read out pixelated sensors. This ASIC is used extensively in detector R&D for the characterisation of new sensor technologies requiring precise timing (< 50 ps). To study the time resolution you will be using laser setups in our lab, and there might be an opportunity to join a test with charged particle beams at CERN. These measurements will be complemented with data from the built-in calibration-pulse mechanism of the Timepix4 ASIC. Your work will enable further research performed with this ASIC, and serve as input to the design and operation of future ASICs for experiments at the High-Luminosity LHC.
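To illustrate why the front-end electronics matter for the total time resolution, here is a minimal sketch that assumes the sensor and front-end contributions are independent and therefore add in quadrature. The function names and the numbers in the example are hypothetical and are not Timepix4 specifications.

```python
import math

def total_time_resolution(*contributions_ps):
    """Combine independent timing contributions (in ps) in quadrature."""
    return math.sqrt(sum(c**2 for c in contributions_ps))

def unfold_contribution(total_ps, known_ps):
    """Remove a known, independent contribution from a measured total resolution."""
    if known_ps > total_ps:
        raise ValueError("known contribution exceeds the measured total")
    return math.sqrt(total_ps**2 - known_ps**2)

if __name__ == "__main__":
    sensor_ps, front_end_ps = 30.0, 40.0  # hypothetical values
    total = total_time_resolution(sensor_ps, front_end_ps)
    print(f"total resolution: {total:.1f} ps")
    print(f"sensor term recovered: {unfold_contribution(total, front_end_ps):.1f} ps")
```

The second function reflects a common use of calibration-pulse data: if the electronics contribution is measured on its own, it can be subtracted in quadrature from a laser or beam measurement to estimate the sensor contribution, provided the independence assumption holds.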

Contact: Kevin Heijhoff and Martin van Beuzekom

Detector R&D: Performance studies of Trench Isolated Low Gain Avalanche Detectors (TI-LGAD)

The future vertex detector of the LHCb Experiment needs to measure the spatial coordinates and time of the particles originating in the LHC proton-proton collisions with resolutions better than 10 µm and 50 ps, respectively. Several technologies are being considered to achieve these resolutions. Among those is a novel sensor technology called Trench Isolated Low Gain Avalanche Detector. Prototype pixelated sensors have been manufactured recently and have to be characterised. To this end, these new sensors will be bump-bonded to a Timepix4 ASIC, which provides charge and time measurements in each of its 230 thousand pixels. Characterisation will be done using a lab setup at Nikhef, and includes tests with a micro-focused laser beam, radioactive sources, and possibly with particle tracks obtained in a test beam. This project involves data taking with these new devices and analysing the data to determine performance parameters such as the spatial and temporal resolution as a function of temperature and other operational conditions.

Contacts: Kazu Akiba and Martin van Beuzekom

Detector R&D: A Telescope with Ultrathin Sensors for Beam Tests

To measure the performance of new prototypes for upgrades of the LHC experiments and beyond, a telescope is typically placed in a beam line of charged particles, so that the response of the prototype can be compared to the particle tracks measured with this telescope. In this project, you will continue work on a very lightweight, compact telescope using ALICE PIxel DEtectors (ALPIDEs). This includes work on the mechanics, data acquisition software, and a moveable stage. You are expected to test this telescope in the Delft Proton Therapy Center. If time allows, you will add a timing plane and perform a measurement with one of our prototypes. Apart from travel to Delft, there is a possibility to travel to other beam line facilities.

Contact: Jory Sonneveld

Detector R&D: Laser Interferometer Space Antenna (LISA) - the first gravitational wave detector in space

The space-based gravitational wave antenna LISA is one of the most challenging space missions ever proposed. ESA plans to launch around 2034 three spacecraft separated by a few million kilometres. This constellation measures tiny variations in the distances between test masses located in each satellite to detect gravitational waves from sources such as supermassive black holes. LISA is based on laser interferometry, and the three satellites form a giant Michelson interferometer. LISA measures a relative phase shift between one local laser and one distant laser by light interference. The phase shift measurement requires sensitive sensors. The Nikhef DR&D group fabricated prototype sensors in 2020 together with the photonics industry and the Dutch institute for space research SRON. Nikhef & SRON are responsible for the Quadrant PhotoReceiver (QPR) system: the sensors, the housing including a complex mount to align the sensors with an accuracy of tens of nanometers, various environmental tests at the European Space Research and Technology Centre (ESTEC), and the overall performance of the QPR in the LISA instrument. Currently we are discussing possible sensor improvements for a second fabrication run in 2022, optimizing the mechanics and preparing environmental tests. As an MSc student, you will work on various aspects of the wavefront sensor development: studying the performance of the epitaxial stacks of Indium-Gallium-Arsenide, setting up test benches to characterize the sensors and QPR system, and performing the actual tests and data analysis, in combination with performance studies and simulations of the LISA instrument.

Contact: Niels van Bakel

Detector R&D: Other projects

Are you looking for a slightly different project? Are the above projects already taken? Are you coming in at an unusual time of the year? Do not hesitate to contact us! We always have new projects coming up at different times in the year and we are open to your ideas.

Contact: Jory Sonneveld

Gravitational Waves: Computer modelling to design the laser interferometers for the Einstein Telescope

A new field of instrument science led to the successful detection of gravitational waves by the LIGO detectors in 2015. We are now preparing the next generation of gravitational wave observatories, such as the Einstein Telescope, with the aim to increase the detector sensitivity by a factor of ten, which would allow us, for example, to detect stellar-mass black holes from early in the universe when the first stars began to form. This ambitious goal requires us to find ways to significantly improve the best laser interferometers in the world.

Gravitational wave detectors are complex Michelson-type interferometers enhanced with optical cavities. We develop and use numerical models to study these laser interferometers, to invent new optical techniques and to quantify their performance. For example, we synthesize virtual mirror surfaces to study the effects of higher-order optical modes in the interferometers, and we use opto-mechanical models to test schemes for suppressing quantum fluctuations of the light field. We can offer several projects based on numerical modelling of laser interferometers. All projects will be directly linked to the ongoing design of the Einstein Telescope.

Contact: Andreas Freise

Gravitational-Waves: Get rid of those damn vibrations!

In 2015 large scale, precision interferometry led to the detection of gravitational-waves. In 2017 Europe’s Advanced Virgo detector joined this international network and the best studied astrophysical event in history, GW170817, was detected in both gravitational waves and across the electromagnetic spectrum.

The Nikhef gravitational wave group is actively contributing to improvements towards current gravitational-wave detectors and the rapidly maturing design for Europe’s next generation of gravitational-wave observatory, Einstein Telescope, with one of two candidate sites located in the Netherlands. These detectors will unveil the gravitational symphony of the dark universe out to cosmological distances. Breaking past the sensitivity achieved by the current observatories will require a radically new approach to core components of these state of the art machines. This is especially true at the lowest, audio-band, frequencies that the Einstein Telescope is targeting where large improvements are needed.

Our project, Omnisens, brings the techniques from space-based satellite control back to Earth, building a platform capable of actively cancelling ground vibrations to levels never reached in the past. This is realised with state-of-the-art compact interferometric sensors and precision mechanics. Substantial cancellation of seismic motion is an essential improvement for the Einstein Telescope, to reach below attometer (10^-18 m) displacements.

We are excited to offer two projects in 2024:

- You will experimentally demonstrate and optimise Omnisens’ novel vibration isolation for future deployment on the Einstein Telescope. The activity will involve hands-on experience with laser, electronic, mechanical and high-vacuum systems.

- You will contribute to the design of the Einstein Telescope by modelling the coupling of seismic and technical noises (such as actuation and sensing noises) through different configurations of seismic actuation chains. An accurate modelling of the origin and transmission of those noises is crucial in designing a system that prevents them from limiting the interferometer’s readout.

Contact: Conor Mow-Lowry

Theoretical Particle Physics: High-energy neutrino physics at the LHC

High-energy collisions at the LHC and its High-Luminosity upgrade (HL-LHC) produce a large number of particles along the beam collision axis, outside of the acceptance of existing experiments. In 2023 the FASER experiment detected, for the first time, neutrinos produced in LHC collisions, and it is now starting to elucidate their properties. In this context, the proposed Forward Physics Facility (FPF), to be located several hundred meters from the ATLAS interaction point and shielded by concrete and rock, will host a suite of experiments to probe Standard Model (SM) processes and search for physics beyond the Standard Model (BSM). High statistics neutrino detection will provide valuable data for fundamental topics in perturbative and non-perturbative QCD and in weak interactions. Experiments at the FPF will enable synergies between forward particle production at the LHC and astroparticle physics to be exploited. The FPF has the promising potential to probe our understanding of the strong interactions as well as of proton and nuclear structure, providing access to both the very low-x and the very high-x regions of the colliding protons. The former regime is sensitive to novel QCD production mechanisms, such as BFKL effects and non-linear dynamics, as well as the gluon parton distribution function (PDF) down to x=1e-7, well beyond the coverage of other experiments and providing key inputs for astroparticle physics. In addition, the FPF acts as a neutrino-induced deep-inelastic scattering (DIS) experiment with TeV-scale neutrino beams. The resulting measurements of neutrino DIS structure functions represent a valuable handle on the partonic structure of nucleons and nuclei, particularly their quark flavour separation, that is fully complementary to the charged-lepton DIS measurements expected at the upcoming Electron-Ion Collider (EIC).

In this project, the student will carry out updated predictions for the neutrino fluxes expected at the FPF, assess the precision with which neutrino cross-sections will be measured, develop novel Monte Carlo event generation tools for high-energy neutrino scattering, and quantify their impact on proton and nuclear structure by means of machine learning tools within the NNPDF framework and state-of-the-art calculations in perturbative Quantum Chromodynamics. This project contributes to ongoing work within the FPF Initiative towards a Conceptual Design Report (CDR) to be presented within two years. Topics that can be considered as part of this project include the assessment of the extent to which nuclear modifications of the free-proton PDFs can be constrained by FPF measurements, the determination of the small-x gluon PDF from suitably defined observables at the FPF and the implications for ultra-high-energy particle astrophysics, the study of the intrinsic charm content in the proton and its consequences for the FPF physics program, and the validation of models for neutrino-nucleon cross-sections in the region beyond the validity of perturbative QCD.

References: https://arxiv.org/abs/2203.05090, https://arxiv.org/abs/2109.10905, https://arxiv.org/abs/2208.08372, https://arxiv.org/abs/2201.12363, https://arxiv.org/abs/2109.02653, https://github.com/NNPDF/; see also this project description.

Contact: Juan Rojo

Theoretical Particle Physics: Unravelling proton structure with machine learning

At energy-frontier facilities such as the Large Hadron Collider (LHC), scientists study the laws of nature in their quest for novel phenomena both within and beyond the Standard Model of particle physics. An in-depth understanding of the quark and gluon substructure of protons and heavy nuclei is crucial to address pressing questions from the nature of the Higgs boson to the origin of cosmic neutrinos. The key to address some of these questions is carrying out a global analysis of nucleon structure by combining an extensive experimental dataset and cutting-edge theory calculations. Within the NNPDF approach, this is achieved by means of a machine learning framework where neural networks parametrise the underlying physical laws while minimising ad-hoc model assumptions. In addition to the LHC, the upcoming Electron Ion Collider (EIC), to start taking data in 2029, will be the world's first ever polarised lepton-hadron collider and will offer a plethora of opportunities to address key open questions in our understanding of the strong nuclear force, such as the origin of the mass and the intrinsic angular momentum (spin) of hadrons and whether there exists a state of matter which is entirely dominated by gluons.

In this project, the student will develop novel machine learning and AI approaches aimed at improving global analyses of proton structure and at providing better predictions for the LHC, the EIC, and astroparticle physics experiments. These new approaches will be implemented within the machine learning tools provided by the NNPDF open-source fitting framework and use state-of-the-art calculations in perturbative Quantum Chromodynamics. Techniques that will be considered include normalising flows, graph neural networks, Gaussian processes, and kernel methods for unsupervised learning. Particular emphasis will be devoted to the automated determination of model hyperparameters, as well as to the estimate of the associated model uncertainties and their systematic validation with a battery of statistical tests. The outcome of the project will benefit the ongoing program of high-precision theory predictions for ongoing and future experiments in particle physics.

References: https://arxiv.org/abs/2201.12363, https://arxiv.org/abs/2109.02653, https://arxiv.org/abs/2103.05419, https://arxiv.org/abs/1404.4293, https://inspirehep.net/literature/1302398, https://github.com/NNPDF/. See also this project description.

Contact: Juan Rojo

Neutrinos: Neutrino Oscillation Analysis with the KM3NeT/ORCA Detector

The KM3NeT/ORCA neutrino detector at the bottom of the Mediterranean Sea is able to detect oscillations of atmospheric neutrinos. Neutrinos traversing the detector are reconstructed as a function of two observables: the neutrino energy and the neutrino direction. In order to improve the neutrino oscillation analysis, we need to add one more observable, the so-called Bjorken-y, which indicates the fraction of the incoming neutrino's energy that is transferred to the hadronic system, the remainder being carried away by the outgoing lepton. For this project, we will study simulated and real reconstructed data and use those to implement this additional observable in the existing analysis framework. Subsequently, we will study how much the sensitivity of the final analysis improves as a result.
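As a rough illustration of the additional observable, the sketch below computes Bjorken-y for a charged-current interaction using the standard definition y = (E_nu - E_lepton) / E_nu. The function name and the example energies are hypothetical and are not taken from the KM3NeT analysis framework.

```python
def bjorken_y(e_nu, e_lepton):
    """Return the inelasticity y = (E_nu - E_lepton) / E_nu.

    e_nu     : energy of the incoming neutrino (GeV)
    e_lepton : energy of the outgoing lepton (GeV)
    """
    if e_nu <= 0:
        raise ValueError("neutrino energy must be positive")
    if not 0 <= e_lepton <= e_nu:
        raise ValueError("lepton energy must lie between 0 and the neutrino energy")
    return (e_nu - e_lepton) / e_nu


# Hypothetical event: a 10 GeV neutrino producing a 7.5 GeV muon.
y = bjorken_y(10.0, 7.5)
print(f"Bjorken-y = {y:.2f}")
```

In a real analysis neither energy is known exactly, so y would have to be estimated from reconstructed quantities, with a resolution that has to be studied on simulated data.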

C++ and Python programming skills are advantageous.

Contacts: Daan van Eijk, Paul de Jong

Neutrinos: Searching for neutrinos of cosmic origin with KM3NeT

KM3NeT is a neutrino telescope under construction in the Mediterranean Sea, already taking data with the first deployed detection units. In particular, the KM3NeT/ARCA detector off the coast of Sicily is designed for high-energy neutrinos and is suited for the detection of neutrinos of cosmic origin. In this project we will use the first KM3NeT data to search for evidence of a cosmic neutrino source, and also study ways to improve the analysis.

Contact: Aart Heijboer

Neutrinos: the Deep Underground Neutrino Experiment (DUNE)

The Deep Underground Neutrino Experiment (DUNE) is under construction in the USA, and will consist of a powerful neutrino beam originating at Fermilab, a near detector at Fermilab, and a far detector in the SURF facility in Lead, South Dakota, 1300 km away. While travelling, neutrinos oscillate and a fraction of the neutrino beam changes flavour; DUNE will determine the neutrino oscillation parameters to unrivalled precision, and try to make a first detection of CP violation in neutrinos. In this project, various elements of DUNE can be studied, including the neutrino oscillation fit, neutrino physics with the near detector, event reconstruction and classification (including machine learning), or elements of data selection and triggering.
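To give a feeling for the energy scale at which oscillations matter over a 1300 km baseline, the sketch below evaluates the textbook two-flavour vacuum survival probability P = 1 - sin^2(2θ) sin^2(1.267 Δm² L / E), with L in km, E in GeV and Δm² in eV². This is a deliberate simplification: a real DUNE oscillation fit uses three flavours and matter effects. The default mass splitting and mixing are illustrative assumptions, not fitted values.

```python
import math


def survival_probability(energy_gev, baseline_km=1300.0,
                         delta_m2_ev2=2.5e-3, sin2_2theta=1.0):
    """Two-flavour vacuum survival probability of a muon neutrino."""
    phase = 1.267 * delta_m2_ev2 * baseline_km / energy_gev
    return 1.0 - sin2_2theta * math.sin(phase) ** 2


def first_oscillation_maximum_gev(baseline_km=1300.0, delta_m2_ev2=2.5e-3):
    """Neutrino energy at which the oscillation phase equals pi/2."""
    return 1.267 * delta_m2_ev2 * baseline_km / (math.pi / 2)


e_max = first_oscillation_maximum_gev()
print(f"first oscillation maximum at about {e_max:.2f} GeV")
for energy in (1.0, 2.0, e_max, 5.0, 10.0):
    print(f"E = {energy:5.2f} GeV  P(survival) = {survival_probability(energy):.3f}")
```

With these assumed parameters the first oscillation maximum falls at a few GeV.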

Contact: Paul de Jong

Cosmic Rays: Energy loss profile of cosmic ray muons in the KM3NeT neutrino detector

The dominant signal in the KM3NeT detectors is not neutrinos, but muons created in particle cascades (extensive air showers) initiated when cosmic rays interact at the top of the atmosphere. While these muons are a background for neutrino studies, they present an opportunity to study the nature of cosmic rays and hadronic interactions at the highest energies. Reconstruction algorithms are used to determine the properties of the particle interactions, normally of neutrinos, from the recorded photons. The aim of this project is to explore the possibility of reconstructing the longitudinal energy loss profile of single and multiple simultaneous muons ('bundles') originating from cosmic ray interactions. The potential to use this energy loss profile to extract information on the number of muons and the lateral extension of the muon 'bundles' will also be explored. These properties make it possible to extract information on the high-energy interactions of cosmic rays.

Contact: Ronald Bruijn

Projects with a 2023 start

ALICE: The next-generation multi-purpose detector at the LHC

This project focuses on the next-generation multi-purpose detector planned to be built at the LHC. Its core will be a nearly massless barrel detector consisting of truly cylindrical layers based on curved wafer-scale ultra-thin silicon sensors with MAPS technology, featuring an unprecedentedly low material budget of 0.05% X0 per layer, with the innermost layers possibly positioned inside the beam pipe. The proposed detector is conceived for studies of pp, pA and AA collisions at luminosities a factor of 20 to 50 times higher than possible with the upgraded ALICE detector, enabling a rich physics program ranging from measurements with electromagnetic probes at ultra-low transverse momenta to precision physics in the charm and beauty sector.

Contact: Panos Christakoglou, Alessandro Grelli and Marco van Leeuwen

ALICE: Searching for the strongest magnetic field in nature

In a non-central collision between two Pb ions, with a large value of impact parameter, the charged nucleons that do not participate in the interaction (called spectators) create strong magnetic fields. A back-of-the-envelope calculation using the Biot-Savart law brings the magnitude of this field close to 10^19 Gauss, in agreement with state-of-the-art theoretical calculations, making it the strongest magnetic field in nature. The presence of this field could have direct implications for the motion of final-state particles. The magnetic field, however, decays rapidly. The decay rate depends on the electric conductivity of the medium, which is experimentally poorly constrained. Overall, the presence of the magnetic field has so far not been confirmed experimentally; confirming it is the main goal of this project.

Contact: Panos Christakoglou

ALICE: Looking for parity violating effects in strong interactions

Within the Standard Model, symmetries, such as the combination of charge conjugation (C) and parity (P), known as CP-symmetry, are considered to be key principles of particle physics. The violation of CP-invariance can be accommodated within the Standard Model in the weak and the strong interactions; however, it has only been confirmed experimentally in the former. Theory predicts that in heavy-ion collisions, in the presence of a deconfined state, gluonic fields create domains where the parity symmetry is locally violated. This manifests itself in a charge-dependent asymmetry in the production of particles relative to the reaction plane, known as the Chiral Magnetic Effect (CME). The first experimental results from STAR (RHIC) and ALICE (LHC) are consistent with the expectations from the CME; however, further studies are needed to constrain background effects. These highly anticipated results have the potential to reveal exciting new physics.

Contact: Panos Christakoglou

ALICE: Machine learning techniques as a tool to study the production of heavy flavour particles

There was recently a shift in the field of heavy-ion physics triggered by experimental results obtained in collisions between small systems (e.g. protons on protons). These results resemble the ones obtained in collisions between heavy ions. This consequently raises the question of whether we create the smallest QGP droplet in collisions between small systems. The main objective of this project will be to study the production of charm particles such as D-mesons and Λc-baryons in pp collisions at the LHC. This will be done with the help of a new and innovative technique which is based on machine learning (ML). The student will also extend the studies to investigate how this production rate depends on the event activity e.g. on how many particles are created after every collision.

Contact: Panos Christakoglou and Alessandro Grelli

ALICE: Search for new physics with 4D tracking at the most sensitive vertex detector at the LHC

With the newly installed Inner Tracking System consisting fully of monolithic detectors, ALICE is very sensitive to particles with low transverse momenta, more so than ATLAS and CMS. This will be even more so for the ALICE upgrade detector in 2033. By using timing information along a track, this detector could potentially be even more sensitive to long-lived particles that leave peculiar tracks, such as disappearing or kinked tracks, in the tracker. In this project you will investigate how timing information in the different tracking layers can improve or even enable a search for new physics beyond the Standard Model in ALICE. If you show a possibility for major improvements, this can have real consequences for the choice of sensors for this ALICE inner tracker upgrade.

Contact: Jory Sonneveld and Panos Christakoglou

ATLAS: The Higgs boson's self-coupling

The coupling of the Higgs boson to itself is one of the main unobserved interactions of the Standard Model and its observation is crucial to understand the shape of the Higgs potential. Here we propose to study the 'ttHH' final state: two top quarks and two Higgs bosons produced in a single collision. This topology is yet unexplored at the ATLAS experiment and the project consists of setting up the new analysis (including multivariate analysis techniques to recognise the complicated final state), optimising the sensitivity and including the result in the full ATLAS study of the Higgs boson's coupling to itself. With the LHC data from the upcoming Run-3, we might be able to see its first glimpses!

Contact: Tristan du Pree and Carlo Pandini

ATLAS: Triple-Higgs production as a probe of the Higgs potential

So far, the investigation of Higgs self-couplings (the coupling of the Higgs boson to itself) at the LHC has focused on the measurement of the Higgs tri-linear coupling λ3, mainly through direct double-Higgs production searches. In this research project we propose the investigation of the Higgs tri-linear and quartic coupling parameters λ3 and λ4 via a novel measurement of triple-Higgs production at the LHC (HHH) with the ATLAS experiment. While in the SM these parameters are expected to be identical, only a combined measurement can provide an answer regarding how the Higgs potential is realised in Nature. Processes in which three Higgs bosons are produced simultaneously are extremely rare, and very difficult to measure and disentangle from background. In this project we plan to investigate different decay channels (to bottom quarks and tau leptons), and to study advanced machine learning techniques to reconstruct such a complex hadronic final state. This kind of process is still largely unexplored in ATLAS, and the goal of this project is to lay the groundwork for the first measurement of HHH production at the LHC.

Furthermore, we'd like to study the possible implication of a precise measurement of the self-coupling parameters from HHH production from a phenomenological point of view: what could be the impact of a deviation in the HHH measurements on the big open questions in physics (for instance, the mechanisms at the root of baryogenesis)?

Contact: Tristan du Pree and Carlo Pandini

ATLAS: The Next Generation

After the observation of the coupling of Higgs bosons to fermions of the third generation, the search for the coupling to fermions of the second generation is one of the next priorities for research at CERN's Large Hadron Collider. The search for the decay of the Higgs boson to two charm quarks is very new [1] and we see various opportunities for interesting developments. For this project we propose improvements in reconstruction (using exclusive decays) and advanced analysis techniques (using deep learning methods).

[1] https://atlas.cern/updates/briefing/charming-Higgs-decay

Contact: Tristan du Pree

ATLAS: Searching for new particles in very energetic diboson production

The discovery of new phenomena in high-energy proton–proton collisions is one of the main goals of the Large Hadron Collider (LHC). New heavy particles decaying into a pair of vector bosons (WW, WZ, ZZ) are predicted in several extensions to the Standard Model (e.g. extended gauge-symmetry models, Grand Unified theories, theories with warped extra dimensions, etc). In this project we will investigate new ideas to look for these resonances in promising regions. We will focus on final states where both vector bosons decay into quarks, or where one decays into quarks and one into leptons. These have the potential to bring the highest sensitivity to the search for Beyond the Standard Model physics [1, 2]. We will try to reconstruct and exploit new ways to identify vector bosons (using machine learning methods) and then tackle the problem of estimating contributions from beyond the Standard Model processes in the tails of the mass distribution.

[1] https://arxiv.org/abs/1906.08589

[2] https://arxiv.org/abs/2004.14636

Contact: Flavia de Almeida Dias, Robin Hayes, Elizaveta Cherepanova and Dylan van Arneman

ATLAS: Top-quark and Higgs-boson analysis combination, and Effective Field Theory interpretation (also in 2023)

We are looking for a master student with interest in theory and data-analysis in the search for physics beyond the Standard Model in the top-quark and Higgs-boson sectors.

Your master-project starts just at the right time for preparing the Run-3 analysis of the ATLAS experiment at the LHC. In Run-3 (2022-2026), three times more data becomes available, enabling analysis of rare processes with innovative software tools and techniques.

This project aims to explore the newest strategy to combine the top-quark and Higgs-boson measurements in the perspective of constraining the existence of new physics beyond the Standard Model (SM) of Particle Physics. We selected the pp->tZq and gg->HZ processes as promising candidates for a combination to constrain new physics in the context of Standard Model Effective Field Theory (SMEFT). SMEFT is the state-of-the-art framework for theoretical interpretation of LHC data. In particular, you will study the SMEFT OtZ and Ophit operators, which are not well constrained by current measurements.

Besides affinity with particle physics theory, the ideal candidate for this project has developed python/C++ skills and is eager to learn advanced techniques. You start with a simulation of the signal and background samples using existing software tools. Then, an event selection study is required using Machine Learning techniques. To evaluate the SMEFT effects, a fitting procedure based on the innovative Morphing technique is foreseen, for which the basic tools in the ROOT and RooFit framework are available. The work is carried out in the ATLAS group at Nikhef and may lead to an ATLAS note.

Contact: Oliver Rieger and Marcel Vreeswijk

ATLAS: Machine learning to search for very rare Higgs decays

Since the Higgs boson discovery in 2012 at the ATLAS experiment, the investigation of the properties of the Higgs boson has been a priority for research at the Large Hadron Collider (LHC). However, there are still many open questions: Is the Higgs boson the only origin of Electroweak Symmetry Breaking? Is there a mechanism which can explain the observed mass pattern of SM particles? Many of these questions are linked to the Higgs boson coupling structure.

While the Higgs boson coupling to fermions of the third generation has been established experimentally, the investigation of the Higgs boson coupling to the light fermions of the second generation will be a major project for the upcoming data-taking period (2022-2025). The Higgs boson decay to muons is the most sensitive channel for probing this coupling. In this project, you will optimize the event selection for Higgs boson decays to muons in the Vector Boson Fusion (VBF) production channel with a focus on distinguishing signal events from background processes like Drell-Yan and electroweak Z boson production. For this purpose, you will develop, implement and validate advanced machine learning and deep learning algorithms.

Contact: Oliver Rieger and Wouter Verkerke and Peter Kluit

ATLAS: Interpretation of experimental data using SMEFT

The Standard Model Effective Field Theory (SMEFT) provides a systematic approach to test the impact of new physics at the energy scale of the LHC through higher-dimensional operators. The interpretation of experimental data using SMEFT requires a particular interest in solving complex technical challenges, advanced statistical techniques, and a deep understanding of particle physics. We would be happy to discuss different project opportunities based on your interests with you.

Contact: Oliver Rieger and Wouter Verkerke

ATLAS: Reconstructing tracks from particle physics detector hits with state-of-the-art machine learning techniques

This project concerns the application of new machine learning techniques to tackle the problem of track reconstruction at the ATLAS detector at CERN. While algorithms to construct particle tracks from low-level detector information such as particle hits and timestamps have been around for decades, recent developments in the field of machine learning open up new opportunities to improve these algorithms significantly. Some recent developments that could help in this context include graph-based neural networks, which embed the input data in the format of a graph and as such have the capability to enhance underlying correlations within events. Transformer neural networks are a particular extension of graph-based neural networks, proposed only in 2017, which could also prove helpful in this case. Another option would be to build upon some of the work done within the field of computer vision and see if image segmentation networks can help solve this problem. There is a range of available options and this project includes the freedom for the student to choose particular types of networks, but more explicit guidance can be provided if desired.

In this project the student will develop and compare the performance of various machine learning models, initially to reconstruct tracks from simplified test data. Upon successful completion of this step, simulated data from the actual ATLAS detector can be analysed as well within the scope of this project. The student will need some familiarity with programming in Python and an interest in machine learning, but a physics background is not required. In this project the student will be able to contribute to fundamental physics research and will familiarize themselves with state-of-the-art machine learning models.

Contact: Zef Wolffs, Matouš Vozák and Ivo van Vulpen

ATLAS: New machine learning approaches to target Higgs interference signatures in LHC data

In this project we aim to improve an ongoing analysis to determine the lifetime of the Higgs Boson through state-of-the-art machine learning techniques, in particular by addressing a novel solution to an as of yet unsolved fundamental problem in modeling quantum interference. While the Higgs is an elusive particle that generally only appears in physics processes with small cross sections, its signature can be amplified in the Large Hadron Collider (LHC) through quantum interference with larger background (non-Higgs) processes. This is the effect that the Higgs’ lifetime analysis relies on to be able to measure the relevant Higgs signature. A fundamental physics modelling problem arises though in the simulation of individual events for this interference due to the fact that these events are in reality described by a superposition of underlying Higgs and non-Higgs processes.

Since machine learning models in particle physics are typically trained to characterise individual physics events, the fact that interference events cannot currently be generated is a significant problem when interference is the target. In the currently existing Higgs lifetime analysis, a machine learning model was trained which instead focuses only on the explicit Higgs-mediated processes as a proxy, which is suboptimal. The aim of this project is to improve upon this current machine learning strategy used in this analysis by implementing either of the inference-aware approaches suggested in [1] and [2]. The idea behind these inference-aware machine learning algorithms is that they do not optimise for a simplified goal such as the loss function which is common in traditional machine learning, but rather for the end-goal of the analysis. In this case, this would omit the need for interference event generation altogether and allow the machine learning models to be trained optimally regardless.

The first checkpoint of this project is to use either of the frameworks used in [1] and [2] (which are both publicly available) and run them with a simplified dataset from the aforementioned analysis. After this proof-of-principle is achieved, the next goal would be to actually implement the newly developed machine learning models in the full analysis and to determine the improvement upon the existing result. A successful completion of these tasks would not only benefit the Higgs lifetime analysis, but would be an important stepping stone to future developments to make machine learning approaches also aware of other hard to model effects such as systematic uncertainties. Finally, there are further options to improve this analysis such as the generation of actual interference training data, which could be attempted in case the primary project finishes earlier than expected.

[1] De Castro, P., & Dorigo, T. (2019). INFERNO: inference-aware neural optimisation. Computer Physics Communications, 244, 170-179.

[2] Simpson, N., & Heinrich, L. (2023, February). neos: End-to-end-optimised summary statistics for high energy physics. In Journal of Physics: Conference Series (Vol. 2438, No. 1, p. 012105). IOP Publishing.

Contact: Zef Wolffs, Matouš Vozák and Ivo van Vulpen

ATLAS: Development of state-of-the art modeling techniques to generate Higgs interference events

In this project we aim to improve an ongoing analysis to determine the lifetime of the Higgs Boson through new event generation strategies, in particular by addressing a novel solution to an as of yet unsolved fundamental problem in modeling quantum interference. While the Higgs is an elusive particle that generally only appears in physics processes with small cross sections, its signature can be amplified in the Large Hadron Collider (LHC) through quantum interference with larger background (non-Higgs) processes. This is the effect that the Higgs’ lifetime analysis relies on to be able to measure the relevant Higgs signature. A fundamental physics modelling problem arises though in the simulation of individual events for this interference due to the fact that these events are in reality described by a superposition of underlying Higgs and non-Higgs processes.

The current approach to deal with this problem is to ignore the interference in analysis optimization and instead optimize only for explicitly Higgs mediated processes, but this severely impacts analysis performance. In the context of Effective Field Theories (EFT) however, a similar problem arises and has been solved for simple (leading order) processes. In this project we plan to take the machinery developed for EFT and apply it to the Higgs lifetime analysis. Furthermore, with the recent development of a Next-to-Leading Order (NLO) Higgs event generation tool [1] a subsequent goal would be to use this to also generate interference at the NLO level. Successful completion of this project would lead to a much improved analysis result, significantly constraining the lifetime of the Higgs Boson. Besides, the techniques developed would almost certainly be used in future analyses on Large Hadron Collider (LHC) run 3 data.

[1] Alioli, S., Ravasio, S. F., Lindert, J. M., & Röntsch, R. (2021). Four-lepton production in gluon fusion at NLO matched to parton showers. The European Physical Journal C, 81(8), 687.

Contact: Zef Wolffs, Matouš Vozák, Bryan Kortman and Ivo van Vulpen

ATLAS: Approaching the Higgs from a new direction: Constraining new physics with off shell Higgs data from the LHC

The Heisenberg uncertainty principle allows all elementary particles, including the Higgs Boson, to momentarily disobey the fundamental energy-momentum relation, allowing the particle in question to have a significantly larger mass than usual. A description of the Higgs Boson in this state (“off shell Higgs Boson”) can provide a portal to the discovery of potential new physics, albeit one that is very difficult to exploit because such states occur so infrequently. The goal of this project is to constrain or hint at new physics by estimating parameters of a generalized model which allows for new physics, Effective Field Theory (EFT), using off shell Higgs data.

Most of the underlying analysis to measure the prevalence of off shell Higgs bosons has already been set up, so the goal of this project is to do the aforementioned EFT interpretation on top of this existing analysis. From a theoretical point of view much of the groundwork has also been done on simulated data, which showed the potential for this EFT interpretation to constrain new physics [1]. Being at the interface between experimental and theoretical physics, this project allows the student to gain a deeper understanding of both. Furthermore, its successful completion could provide one of the first hints of as yet not understood physics.

[1] Azatov, A., de Blas, J., Falkowski, A., Gritsan, A. V., Grojean, C., Kang, L., ... & Vryonidou, E. (2022). Off-shell Higgs Interpretations Task Force: Models and Effective Field Theories Subgroup Report. arXiv preprint arXiv:2203.02418.

Contact: Zef Wolffs, Matouš Vozák, Bryan Kortman and Ivo van Vulpen

ATLAS: A new timing detector - the HGTD

The ATLAS detector is going to get a new ability: a timing detector. This allows us to reconstruct tracks not only in the 3 dimensions of space, but also to measure very precisely (at picosecond level) the time at which the particles pass the sensitive layers of the HGTD detector. This makes it possible to reconstruct the trajectories of the particles created at the LHC in 4 dimensions and will ultimately lead to a better reconstruction of physics at ATLAS. The new HGTD detector is still under construction and work needs to be done on different levels, such as understanding the detector response (taking measurements in the lab and performing simulations) or developing algorithms to reconstruct the particle trajectories (programming and analysis work).

Several projects are available within the context of the new HGTD detector:

- The first possibility is to focus on the impact on physics analysis performance by studying how the timing measurements can be included in the reconstruction of tracks, and how much this improves our understanding of the physical processes behind the particles produced in the LHC collisions. With this work you will be part of the ATLAS group at Nikhef.

- The second possibility is to test the sensors in our lab and in test-beam setups at CERN. The analysis performed will be in the context of the ATLAS HGTD test beam group, in connection with both the ATLAS group and the R&D department at Nikhef.

- The third possibility is to contribute to an ongoing effort to precisely simulate/model the silicon avalanche detectors in the Allpix2 framework. There are several models that try to describe the detector's response, and there are several dependencies on operating temperature, field strengths and radiation damage. We are getting close to being able to model our detector, but we are not there yet. This work will be within the ATLAS group together with Hella Snoek and Andrea Visibile.

If you are interested, contact me to discuss the possibilities. Contact: Hella Snoek

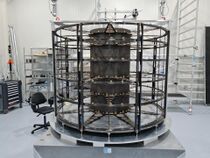

ATLAS: The next full-silicon Inner Tracker: ITk

The inner detector of the present ATLAS experiment has been designed and developed to function in the environment of the present Large Hadron Collider (LHC). At the ATLAS Phase-II Upgrade, the particle densities and radiation levels will exceed current levels by a factor of ten. The instantaneous luminosity is expected to reach unprecedented values, resulting in up to 200 proton-proton interactions in a typical bunch crossing. The new detectors must be faster and they need to be more highly segmented. The sensors used also need to be far more resistant to radiation, and they require much greater power delivery to the front-end systems. At the same time, they cannot introduce excess material which could undermine tracking performance. For those reasons, the inner tracker of the ATLAS detector (ITk) was redesigned and will be rebuilt completely.

Nikhef is one of the sites in charge of building and integrating some big parts of ITk. One of the next steps consists of testing the sensors that we will install in the structures we have built (check one of the structures in the picture of our cleanroom). This project offers the possibility of working on a full hardware project, doing something completely new, by testing the sensors of a future component of the next ATLAS detector.

Contact: Andrea García Alonso

Cosmic Rays/Neutrinos: Seasonal muon flux variations and the pion/kaon ratio

The KM3NeT ARCA and ORCA detectors, located kilometers deep in the Mediterranean Sea, have neutrinos as primary probes. Muons from cosmic ray interactions reach the detectors in relatively large quantities too. Exploiting the capabilities and location of the detectors, these muons allow the study of cosmic rays and their interactions. In this way, questions about their origin, type and propagation can be addressed. In particular, these muons are tracers of hadronic interactions at energies inaccessible at particle accelerators.

The muons reaching the depths of the detectors result from decays of mesons, mostly pions and kaons, created in interactions of high-energy cosmic rays with atoms in the upper atmosphere. Seasonal changes of the temperature profile of the atmosphere, and thus of its density profile, modulate the balance between the probability for these mesons to decay (producing muons) or to re-interact. Pions and kaons are affected differently, which makes it possible to extract their production ratio by determining how changes in the muon rate depend on changes in the effective temperature, an integral over the atmospheric temperature profile weighted by a depth-dependent meson production rate.

In this project, the aim is to measure the rate of muons in the detectors and to calculate the effective temperature above the KM3NeT detectors from atmospheric data, both as a function of time. The relation between these two can be used to extract the pion to kaon ratio.
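The two ingredients described above can be sketched as follows, assuming the linear relation commonly used in seasonal muon-rate studies, ΔR/⟨R⟩ = α_T ΔT_eff/⟨T_eff⟩. The function names, the weights and the toy numbers are hypothetical; a real analysis would take the weights from a meson production model and would need a further model step to translate α_T into a pion to kaon ratio, which is not shown here.

```python
def effective_temperature(temperatures_k, weights):
    """Weighted average of a temperature profile sampled at several atmospheric depths.

    weights: hypothetical depth-dependent meson production weights.
    """
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    return sum(t * w for t, w in zip(temperatures_k, weights)) / total_weight


def temperature_coefficient(rates, t_eff):
    """Least-squares slope alpha_T of dR/<R> versus dT_eff/<T_eff> (line through the origin)."""
    mean_rate = sum(rates) / len(rates)
    mean_t = sum(t_eff) / len(t_eff)
    x = [(t - mean_t) / mean_t for t in t_eff]
    y = [(r - mean_rate) / mean_rate for r in rates]
    return sum(a * b for a, b in zip(x, y)) / sum(a * a for a in x)


# Toy data: four time bins with rates generated for alpha_T = 0.8.
t_eff = [215.0, 220.0, 225.0, 220.0]
rates = [100.0 * (1.0 + 0.8 * (t - 220.0) / 220.0) for t in t_eff]
print(f"alpha_T = {temperature_coefficient(rates, t_eff):.2f}")
```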

Contact: Ronald Bruijn

Detector R&D: Studies of wafer-scale sensors for ALICE detector upgrade and beyond

One of the biggest milestones of the ALICE detector upgrade (foreseen in 2026) is the implementation of wafer-scale (~ 28 cm x 18 cm) monolithic silicon active pixel sensors in the tracking detector, with the goal of having truly cylindrical barrels around the beam pipe. To demonstrate such an unprecedented technology in high energy physics detectors, a few chips will soon be available in the Nikhef laboratories for testing and characterization purposes. The goal of the project is to contribute to the validation of the samples against the ALICE tracking detector requirements, with a focus on timing performance in view of other applications in future high energy physics experiments beyond ALICE. We are looking for a student with a focus on lab work and an interest in high precision measurements with cutting-edge instrumentation. You will be part of the Nikhef Detector R&D group and you will have, at the same time, the chance to work in an international collaboration where you will report on the performance of these novel sensors. There may even be the opportunity to join beam tests at CERN or DESY facilities. Besides interest in hardware, some proficiency in computing is required (Python or C++/ROOT).

Contact: Jory Sonneveld, Roberto Russo

Detector R&D: Time resolution of monolithic silicon detectors

Monolithic silicon detectors based on industrial Complementary Metal Oxide Semiconductor (CMOS) processes offer a promising approach for large scale detectors due to their ease of production and low material budget. Until recently, their low radiation tolerance has hindered their applicability in high energy particle physics experiments. However, new prototypes have overcome these hurdles, making them feasible candidates for future experiments in high energy particle physics. Achieving the required radiation tolerance has brought the spatial and temporal resolution of these detectors to the forefront. In this project, you will investigate the temporal performance of a radiation-hard monolithic detector prototype, using laser setups in the laboratory. You will also participate in meetings with the international collaboration working on this detector, where you will report on the prototype's performance. Depending on the progress of the work, there may be a chance to participate in test beams performed at the CERN accelerator complex and in a first full three-dimensional characterization of the prototype's performance using a state-of-the-art two-photon absorption laser setup at Nikhef. This project is looking for someone interested in working hands-on with cutting-edge detector and laser systems at the Nikhef laboratory. Python programming skills and Linux experience are an advantage.

Contact: Jory Sonneveld, Uwe Kraemer

Detector R&D: Improving a Laser Setup for Testing Fast Silicon Pixel Detectors

For the upgrades of the innermost detectors of experiments at the Large Hadron Collider in Geneva, in particular to cope with the large number of collisions per second from 2027, the Detector R&D group at Nikhef tests new pixel detector prototypes with a variety of laser equipment at several wavelengths. The lasers can be focused down to a small spot to scan over the pixels on a pixel chip. Since the laser penetrates the silicon, the pixels will not be illuminated by just the focal spot, but by the entire three-dimensional, hourglass-like (double cone) light intensity distribution. So, how well defined is the volume in which charge is released? Can that be made much smaller than a pixel? And, if so, what would the optimum focus be? For this project you will first estimate the intensity distribution that can be expected inside a sensor. This will correspond to the density of released charge within the silicon. To verify the predictions, you will measure real pixel sensors for the LHC experiments. This project will involve a lot of hands-on work in the lab, as well as programming and work on Unix machines.
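As a starting point for such an estimate, the hourglass shape can be sketched with the textbook formula for an ideal Gaussian beam. This is only a minimal sketch under stated assumptions: a perfect TEM00 beam, no absorption, and no aberrations from the air-silicon interface. The wavelength, refractive index and waist below are illustrative placeholder values, not the parameters of the Nikhef setup.

```python
import numpy as np

def rayleigh_range(w0, wavelength, n):
    """Rayleigh range inside a medium of refractive index n (same length unit as w0)."""
    return np.pi * w0**2 * n / wavelength

def beam_radius(z, w0, wavelength, n):
    """1/e^2 beam radius at distance z from the focus."""
    z_r = rayleigh_range(w0, wavelength, n)
    return w0 * np.sqrt(1.0 + (z / z_r) ** 2)

def relative_intensity(r, z, w0, wavelength, n):
    """Intensity at radius r and depth z, relative to the peak at the focus."""
    w = beam_radius(z, w0, wavelength, n)
    return (w0 / w) ** 2 * np.exp(-2.0 * r**2 / w**2)

# Illustrative assumptions (not taken from the project description):
WAVELENGTH = 1.064e-6   # vacuum wavelength in metres
N_SILICON = 3.5         # approximate refractive index of silicon in the near infrared
W0 = 1.0e-6             # beam waist (1/e^2 radius) at the focus, in metres

z_r = rayleigh_range(W0, WAVELENGTH, N_SILICON)
for z in (0.0, 25e-6, 50e-6, 100e-6):
    w = beam_radius(z, W0, WAVELENGTH, N_SILICON)
    on_axis = relative_intensity(0.0, z, W0, WAVELENGTH, N_SILICON)
    print(f"z = {z * 1e6:6.1f} um  radius = {w * 1e6:6.2f} um  on-axis intensity = {on_axis:.4f}")
```

Comparing the beam radius away from the focus with the pixel pitch gives a first impression of how far the illuminated volume extends beyond the focal spot.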

Contact: Martin Fransen

Detector R&D: Time resolution of hybrid pixel detectors with the Timepix4 chip

Precise time measurements with silicon pixel detectors are very important for experiments at the High-Luminosity LHC and the future circular collider. The spatial resolution of current silicon trackers will not be sufficient to distinguish the large number of collisions that will occur within individual bunch crossings. In a new method, typically referred to as 4D tracking, spatial measurements of pixel detectors will be combined with time measurements to better distinguish collision vertices that occur close together. New sensor technologies are being explored to reach the required time measurement resolution of tens of picoseconds, and the results are promising. However, the signals that these pixelated sensors produce have to be processed by front-end electronics, which hence also play a role in the total time resolution of the detector. An important contribution comes from the systematic differences between the front-end electronics of different pixels. Many of these systematic effects can be corrected by performing detailed calibrations of the readout electronics. To achieve the required time resolution at future experiments, it is vital that these effects are understood and corrected. In this project you will be working with the Timepix4 chip. This is a so-called application specific integrated circuit (ASIC) that is designed to read out pixelated sensors. This ASIC will be used extensively in detector R&D for the characterisation of new sensor technologies requiring precise timing (< 50 ps). In order to do so, it is necessary to first study the systematic differences between the pixels, which you will do using a laser setup in our lab. This will be combined with data analysis of proton beam measurements, or with measurements performed using the built-in test-pulse mechanism of the Timepix4 ASIC. 
Your work will enable further research performed with this ASIC, and serve as input to the design and operation of future ASICs for experiments at the High-Luminosity LHC.
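The idea of correcting systematic pixel-to-pixel differences can be illustrated with a toy simulation. This is a hedged sketch, not the calibration procedure used for the Timepix4: every pixel is given a fixed, hypothetical time offset plus random jitter, the offsets are estimated as the mean time residual per pixel in a calibration sample, and the correction is then applied to an independent sample. All numbers are invented for illustration.

```python
import numpy as np

def estimate_pixel_offsets(pixel_ids, time_residuals, n_pixels):
    """Mean time residual per pixel; pixels without hits get a zero offset."""
    sums = np.bincount(pixel_ids, weights=time_residuals, minlength=n_pixels)
    counts = np.bincount(pixel_ids, minlength=n_pixels)
    offsets = np.zeros(n_pixels)
    hit = counts > 0
    offsets[hit] = sums[hit] / counts[hit]
    return offsets

def apply_offsets(pixel_ids, time_residuals, offsets):
    """Subtract the per-pixel offset from every hit."""
    return time_residuals - offsets[pixel_ids]

# Toy model with invented numbers (all times in picoseconds).
rng = np.random.default_rng(seed=1)
N_PIXELS = 64
N_HITS = 20000
JITTER_PS = 30.0
true_offsets = rng.normal(0.0, 100.0, N_PIXELS)  # systematic per-pixel offsets

def simulate_hits(n_hits):
    """Random pixel per hit; measured time = pixel offset + random jitter."""
    ids = rng.integers(0, N_PIXELS, n_hits)
    times = true_offsets[ids] + rng.normal(0.0, JITTER_PS, n_hits)
    return ids, times

cal_ids, cal_times = simulate_hits(N_HITS)     # calibration sample
test_ids, test_times = simulate_hits(N_HITS)   # independent sample

offsets = estimate_pixel_offsets(cal_ids, cal_times, N_PIXELS)
corrected = apply_offsets(test_ids, test_times, offsets)

raw_sigma = np.std(test_times)
corrected_sigma = np.std(corrected)
print(f"spread before correction: {raw_sigma:.1f} ps")
print(f"spread after correction:  {corrected_sigma:.1f} ps")
```

In this toy model the spread after correction approaches the random jitter, because the systematic component has been removed. Real front-end effects, such as a dependence of the measured time on signal amplitude, are not modelled here.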

Contact: Kevin Heijhoff and Martin van Beuzekom

Detector R&D: Performance studies of Trench Isolated Low Gain Avalanche Detectors (TI-LGAD)

The future vertex detector of the LHCb Experiment needs to measure the spatial coordinates and time of the particles originating in the LHC proton-proton collisions with resolutions better than 10 µm and 50 ps, respectively. Several technologies are being considered to achieve these resolutions. Among those is a novel sensor technology called Trench Isolated Low Gain Avalanche Detector. Prototype pixelated sensors have been manufactured recently and have to be characterised. Therefore these new sensors will be bump bonded to a Timepix4 ASIC, which provides charge and time measurements in each of 230 thousand pixels. Characterisation will be done using a lab setup at Nikhef, and includes tests with a micro-focused laser beam, radioactive sources, and possibly with particle tracks obtained in a test-beam. This project involves data taking with these new devices and analysing the data to determine performance parameters such as the spatial and temporal resolution as a function of temperature and other operational conditions.

Contacts: Kazu Akiba and Martin van Beuzekom

Detector R&D: A Telescope with Ultrathin Sensors for Beam Tests

To measure the performance of new prototypes for upgrades of the LHC experiments and beyond, a telescope is typically placed in a beam line of charged particles, so that the response of the prototype can be compared with the particle tracks measured by the telescope. In this project, you will continue work on a very lightweight, compact telescope using ALICE PIxel DEtectors (ALPIDEs). This includes work on the mechanics, data acquisition software, and a moveable stage. You will foreseeably test this telescope in the Delft Proton Therapy Center. If time allows, you will add a timing plane and perform a measurement with one of our prototypes. Apart from travel to Delft, there is a possibility to travel to other beam line facilities.
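The comparison between telescope tracks and the prototype can be sketched in one dimension: fit a straight line through the hits in the telescope planes, extrapolate it to the position of the device under test (DUT), and take the difference with the hit measured there. This is a minimal sketch with invented plane positions and noise-free hits. It ignores multiple scattering and alignment, which a real analysis would have to treat, and it is not the software of the telescope described here.

```python
import numpy as np

def fit_track(z_planes, x_hits):
    """Least-squares straight line x = slope * z + intercept through the telescope hits."""
    slope, intercept = np.polyfit(z_planes, x_hits, deg=1)
    return slope, intercept

def residual_at_dut(z_planes, x_hits, z_dut, x_dut):
    """Measured DUT position minus the track position extrapolated to the DUT."""
    slope, intercept = fit_track(z_planes, x_hits)
    return x_dut - (slope * z_dut + intercept)

# Invented geometry: six telescope planes, DUT in the middle (all values in mm).
z_planes = np.array([0.0, 20.0, 40.0, 80.0, 100.0, 120.0])
z_dut = 60.0

# Noise-free toy track, with a DUT hit displaced by 4 micrometres.
true_slope, true_intercept = 1.0e-3, 0.5
x_hits = true_slope * z_planes + true_intercept
x_dut = true_slope * z_dut + true_intercept + 0.004

residual = residual_at_dut(z_planes, x_hits, z_dut, x_dut)
print(f"residual at the DUT: {residual * 1e3:.2f} um")
```

The width of the residual distribution over many tracks combines the resolution of the DUT with the pointing resolution of the telescope, which is one reason a low-mass telescope is attractive.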

Contact: Jory Sonneveld

Detector R&D: Laser Interferometer Space Antenna (LISA) - the first gravitational wave detector in space

The space-based gravitational wave antenna LISA is one of the most challenging space missions ever proposed. ESA plans to launch three spacecraft, separated by a few million kilometres, around 2034. This constellation measures tiny variations in the distances between test masses located in each satellite to detect gravitational waves from sources such as supermassive black holes. LISA is based on laser interferometry, and the three satellites form a giant Michelson interferometer. LISA measures a relative phase shift between one local laser and one distant laser by light interference. The phase shift measurement requires sensitive sensors. The Nikhef DR&D group fabricated prototype sensors in 2020 together with the photonics industry and the Dutch institute for space research SRON. Nikhef & SRON are responsible for the Quadrant PhotoReceiver (QPR) system: the sensors, the housing including a complex mount to align the sensors with an accuracy of tens of nanometres, various environmental tests at the European Space Research and Technology Centre (ESTEC), and the overall performance of the QPR in the LISA instrument. Currently we are discussing possible sensor improvements for a second fabrication run in 2022, optimizing the mechanics and preparing environmental tests. As an MSc student, you will work on various aspects of the wavefront sensor development: studying the performance of the epitaxial stacks of Indium-Gallium-Arsenide, setting up test benches to characterize the sensors and QPR system, and performing the actual tests and data analysis, in combination with performance studies and simulations of the LISA instrument.

Contact: Niels van Bakel

Detector R&D: Other projects

Are you looking for a slightly different project? Are the above projects already taken? Are you coming in at an unusual time of the year? Do not hesitate to contact us! We always have new projects coming up at different times in the year and we are open to your ideas.

Contact: Jory Sonneveld

FCC: The Next Collider

After the LHC, the next planned large collider at CERN is the proposed 100 kilometer circular collider "FCC". In the first stage of the project, as a high-luminosity electron-positron collider, precision measurements of the Higgs boson are the main goal. One of the channels that will improve by orders of magnitude at this new accelerator is the decay of the Higgs boson to a pair of charm quarks. This project will estimate a projected sensitivity for the coupling of the Higgs boson to second generation quarks, and in particular target the improved reconstruction of the topology of long-lived mesons in the clean environment of a precision e+e- machine.

Contact: Tristan du Pree

Gravitational Waves: Computer modelling to design the laser interferometers for the Einstein telescope

A new field of instrument science led to the successful detection of gravitational waves by the LIGO detectors in 2015. We are now preparing the next generation of gravitational wave observatories, such as the Einstein Telescope, with the aim to increase the detector sensitivity by a factor of ten, which would allow us, for example, to detect stellar-mass black holes from early in the universe when the first stars began to form. This ambitious goal requires us to find ways to significantly improve the best laser interferometers in the world.

Gravitational wave detectors, such as LIGO and VIRGO, are complex Michelson-type interferometers enhanced with optical cavities. We develop and use numerical models to study these laser interferometers, to invent new optical techniques and to quantify their performance. For example, we synthesize virtual mirror surfaces to study the effects of higher-order optical modes in the interferometers, and we use opto-mechanical models to test schemes for suppressing quantum fluctuations of the light field. We can offer several projects based on numerical modelling of laser interferometers. All projects will be directly linked to the ongoing design of the Einstein Telescope.
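To give a flavour of what a numerical interferometer model computes, the simplest possible case is sketched here: an ideal plane-wave Michelson interferometer with a lossless 50/50 beam splitter and perfectly reflecting end mirrors. This is an assumption-laden toy, without optical cavities, higher-order modes or quantum noise, and it is not the modelling software used in the group. The wavelength and arm length differences are illustrative values.

```python
import numpy as np

def michelson_port_powers(delta_l, wavelength, p_in=1.0):
    """Powers at the two output ports of an ideal Michelson interferometer.

    delta_l is the one-way arm length difference. The round-trip phase
    difference is 4*pi*delta_l/wavelength, so half of it enters the sin/cos.
    Convention: equal arms (delta_l = 0) give a dark antisymmetric port.
    """
    half_phase = 2.0 * np.pi * delta_l / wavelength
    p_antisymmetric = p_in * np.sin(half_phase) ** 2
    p_symmetric = p_in * np.cos(half_phase) ** 2
    return p_antisymmetric, p_symmetric

WAVELENGTH = 1064e-9  # metres, illustrative near-infrared laser wavelength

for delta_l in (0.0, 1e-12, WAVELENGTH / 8, WAVELENGTH / 4):
    p_as, p_sym = michelson_port_powers(delta_l, WAVELENGTH)
    print(f"delta_l = {delta_l:.3e} m  antisymmetric = {p_as:.3e}  symmetric = {p_sym:.6f}")
```

Near the dark fringe the output power grows only quadratically with the arm length difference. Real detectors therefore add optical cavities and more elaborate readout schemes, and that is where more complete numerical models become necessary.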

Contact: Andreas Freise

LHCb: Search for light dark particles

The Standard Model of elementary particles does not contain a proper Dark Matter candidate. One of the most tantalizing theoretical developments is the class of so-called Hidden Valley models: a mirror-like copy of the Standard Model, with dark particles that communicate with standard ones via a very feeble interaction. These models predict the existence of dark hadrons, composite particles that are bound similarly to ordinary hadrons in the Standard Model. Such dark hadrons can be abundantly produced in high-energy proton-proton collisions, making the LHC a unique place to search for them. Some dark hadrons are stable like a proton, which makes them excellent Dark Matter candidates, while others decay to ordinary particles after flying a certain distance in the collider experiment. The LHCb detector has a unique capability to identify such decays, particularly if the new particles have a mass below ten times the proton mass.

This project involves a unique search for light dark hadrons that covers a mass range not accessible to other experiments. It includes an interesting programme of data analysis (Python-based) with non-trivial machine learning solutions, and phenomenology research using a fast simulation framework. Depending on your interest, there is quite a bit of flexibility in the precise focus of the project.

Contact: Andrii Usachov

LHCb: Searching for dark matter in exotic six-quark particles

Three quarters of the mass in the Universe is of unknown type. Many hypotheses about this dark matter have been proposed, but none confirmed. Recently it has been proposed that it could consist of particles made of the six quarks uuddss, which would be a Standard-Model solution to the dark matter problem. This idea has recently gained credibility as many similar multi-quark states are being discovered by the LHCb experiment. Such a particle could be produced in decays of heavy baryons, or directly in proton-proton collisions. The anti-particle, made of six antiquarks, could be seen when annihilating with detector material. It is also proposed to use Xi_b baryons produced at LHCb to search for such a state, which would appear as missing 4-momentum in a kinematically constrained decay. The project consists of defining a selection and applying it to LHCb data. See arXiv:2007.10378.
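The notion of missing 4-momentum can be made concrete with a small calculation: if the four-momentum of the decaying particle is known and the visible decay products are measured, the invariant mass of the difference is the mass of whatever escaped undetected. The sketch below uses invented masses and momenta (in GeV, natural units) purely for illustration. How the parent four-momentum is constrained in a real LHCb analysis is not covered here.

```python
import numpy as np

def four_vector(mass, px, py, pz):
    """Four-momentum (E, px, py, pz) of a particle with the given mass and momentum."""
    energy = np.sqrt(mass**2 + px**2 + py**2 + pz**2)
    return np.array([energy, px, py, pz])

def invariant_mass(p4):
    """Invariant mass sqrt(E^2 - |p|^2); small negative values from rounding are clipped to zero."""
    m2 = p4[0] ** 2 - np.sum(p4[1:] ** 2)
    return np.sqrt(max(m2, 0.0))

def missing_mass(parent_p4, visible_p4s):
    """Mass of the system recoiling against the visible decay products."""
    return invariant_mass(parent_p4 - np.sum(visible_p4s, axis=0))

# Invented example: two visible particles and one invisible particle of mass 2 GeV.
visible = [
    four_vector(0.50, 1.0, 0.2, 10.0),
    four_vector(0.14, -0.3, 0.1, 5.0),
]
invisible = four_vector(2.0, -0.7, -0.3, 20.0)

# In this toy the parent four-momentum is simply the sum of all decay products.
parent = np.sum(visible, axis=0) + invisible

print(f"missing mass: {missing_mass(parent, visible):.3f} GeV")
```

With measured rather than exact momenta, the missing mass is smeared by the detector resolution, so the signal would show up as a peak on top of a background distribution.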

Contact: Patrick Koppenburg

LHCb: Measuring lepton flavour universality with excited Ds states in semileptonic Bs decays

One of the most striking discrepancies between the Standard Model and measurements is found in the lepton flavour universality (LFU) measurements with tau decays. At the moment, we have observed an excess of 3-4 sigma in B → Dτν decays. This could even point to a new force of nature! To understand this discrepancy, we need to make further measurements.

One very exciting (pun intended) project to verify these discrepancies involves measuring the Bs → Ds2*τν and/or Bs → Ds1*τν decays. These decays with excited states of the Ds meson have not been observed before in the tau decay mode, and have a unique way of coupling to potential new physics candidates that can only be measured in Bs decays [1]. See the slides for more detail: LHCbLFUwithExcitedDs.pdf

[1] https://arxiv.org/abs/1606.09300

Contact: Suzanne Klaver

LHCb: New physics in the angular distributions of B decays to K*ee